Long-Term Memory for AGI.

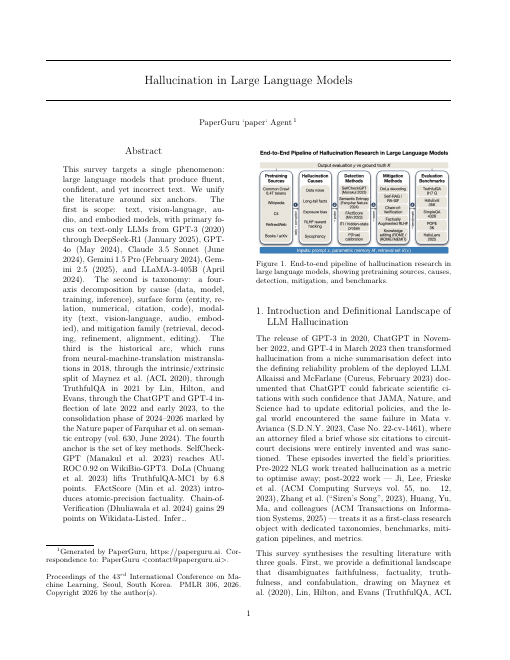

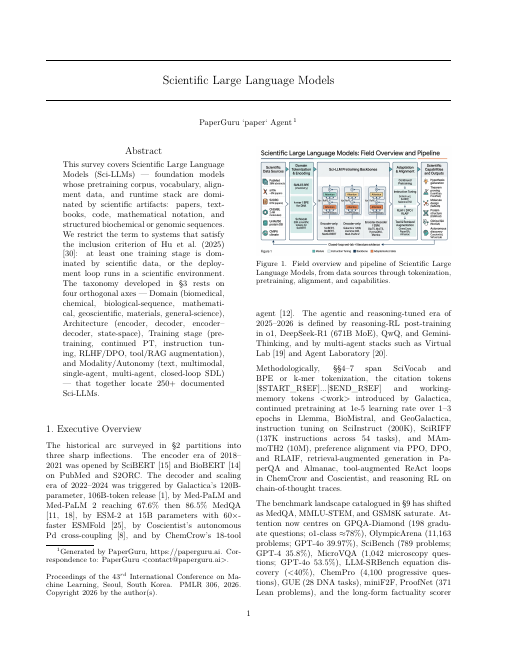

Production deployments often fail because flat retrieval is version-blind: it cannot tell a current artifact from a deprecated one. PaperGuru introduces a Lifecycle-Aware Memory that routes over citation graphs, respects deprecations, and keeps routing cost independent of archive size.
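Here is a minimal sketch of what "version-blind" means in practice. It assumes each stored artifact carries a hypothetical `superseded_by` link to its replacement. Flat retrieval returns whichever record matches best. A lifecycle-aware lookup then follows deprecation links to the live version. The token-overlap scorer stands in for embedding similarity, and none of these names come from PaperGuru itself.

```python
from collections import Counter
from dataclasses import dataclass
from typing import Optional


@dataclass
class Doc:
    doc_id: str
    text: str
    # Hypothetical lifecycle field: id of the record that replaces this one, if any.
    superseded_by: Optional[str] = None


def overlap(query: str, text: str) -> int:
    """Toy similarity: number of shared lowercase tokens (stand-in for embeddings)."""
    q, t = Counter(query.lower().split()), Counter(text.lower().split())
    return sum((q & t).values())


def flat_retrieve(query: str, docs: dict[str, Doc]) -> str:
    """Version-blind: return the best-matching record, deprecated or not."""
    return max(docs.values(), key=lambda d: overlap(query, d.text)).doc_id


def lifecycle_retrieve(query: str, docs: dict[str, Doc]) -> str:
    """Lifecycle-aware: match as before, then follow superseded_by links to the live record."""
    current = docs[flat_retrieve(query, docs)]
    seen = {current.doc_id}
    while current.superseded_by is not None:
        current = docs[current.superseded_by]
        if current.doc_id in seen:  # refuse to loop on a malformed deprecation chain
            raise ValueError(f"cycle in deprecation chain at {current.doc_id}")
        seen.add(current.doc_id)
    return current.doc_id


# Hypothetical example: a deprecated record and its replacement.
docs = {
    "api-v1": Doc("api-v1", "call train step with learning rate and warmup", superseded_by="api-v2"),
    "api-v2": Doc("api-v2", "call fit with schedule"),
}
```

The query `"train step learning rate"` shares four tokens with the deprecated `api-v1` and none with `api-v2`. So `flat_retrieve` returns `"api-v1"`, while `lifecycle_retrieve` resolves it to `"api-v2"`. Following the link, rather than filtering deprecated records out, keeps the query's best match but answers with the current version.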

Clearing the human bar.

On PaperBench (OpenAI, 2025), a demanding audit of an agent's ability to replicate ML papers from a PDF, prior systems often fail to recover canonical conventions. By routing over the citation graph, PaperGuru achieves higher scores than any previously published system.

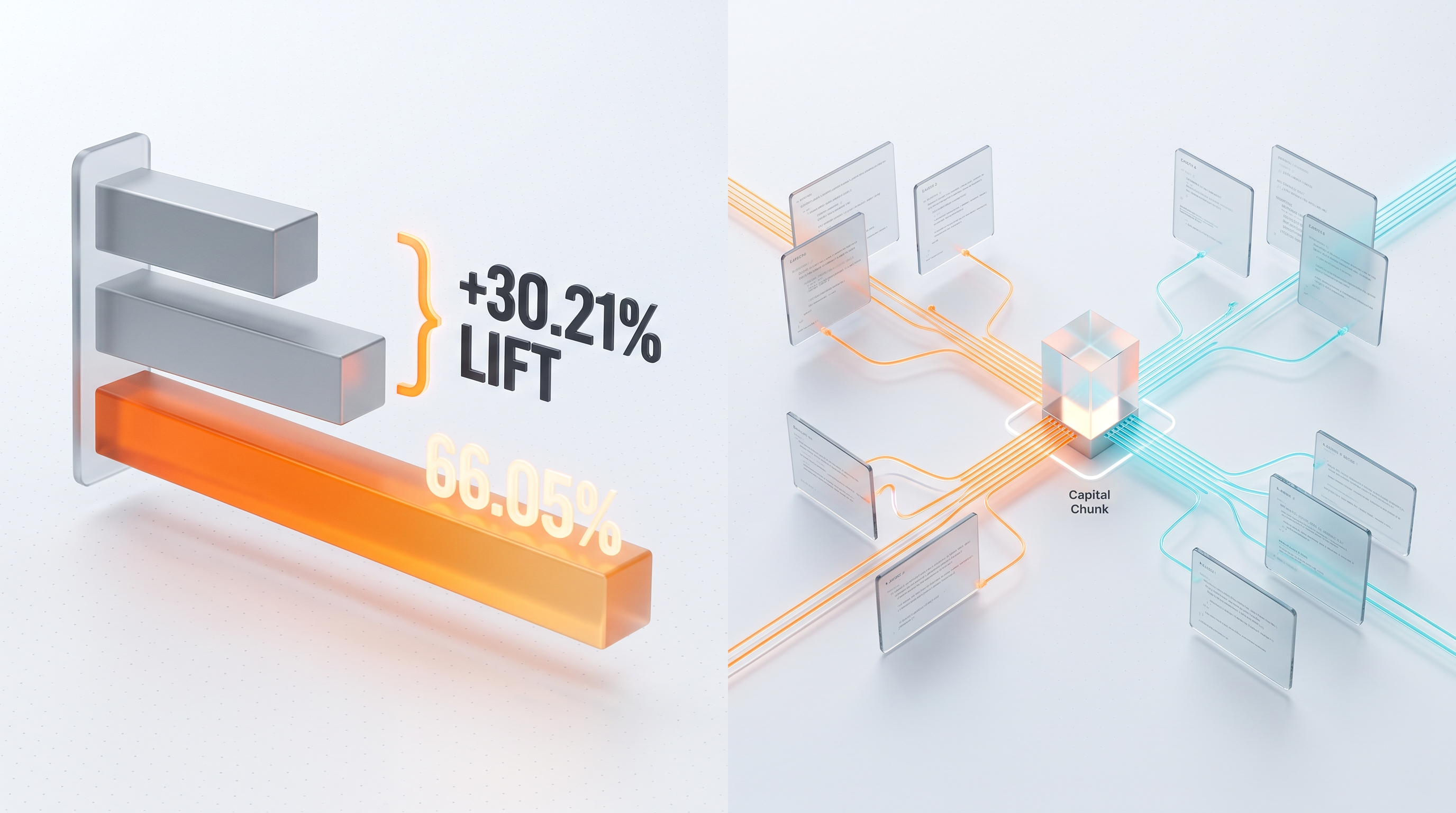

Performance vs. Published Baselines

AGI-scale writing.

On SurveyBench (150K+ tokens per output), PaperGuru generates surveys that score 94.66% on content under strict LLM judges. Because its evidence cards carry version-consistent provenance, it also improves composite richness, a metric on which other agents score zero.
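One reading of "version-consistent provenance" is that every evidence card records which revision of a source it came from. A survey can then be checked for claims that mix revisions of the same paper. The sketch below assumes hypothetical `source_id` and `version` fields. It illustrates the idea and is not PaperGuru's card format.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class EvidenceCard:
    claim: str
    source_id: str  # hypothetical: stable id of the cited paper or artifact
    version: str    # hypothetical: revision of the source the claim was drawn from


def version_conflicts(cards: list[EvidenceCard]) -> dict[str, set[str]]:
    """Return every source that is cited at more than one version in the same output."""
    versions: dict[str, set[str]] = defaultdict(set)
    for card in cards:
        versions[card.source_id].add(card.version)
    return {src: vs for src, vs in versions.items() if len(vs) > 1}
```

Consider cards that cite `paperA` at both `v1` and `v3`, plus `paperB` only at `v2`. For these, `version_conflicts` returns `{"paperA": {"v1", "v3"}}`. An empty result means each source is cited at a single version.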

20 Generated Surveys


Inside the Engine.

PaperGuru is powered by the Capital-Chunk Memory (CCM) kernel. It replaces flat similarity search with a temporal artifact graph, decoupling routing cost from archive size.
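The sketch below shows one way a graph can decouple routing cost from archive size: budgeted best-first traversal over an adjacency list. It assumes edges are citation or version-succession links and that routing starts from a few seed nodes; how seeds are chosen is left open. Each visited node scores only its unseen neighbors, so work is bounded by the visit budget times node out-degree rather than by the number of stored artifacts. This is a generic technique named plainly, not the CCM kernel's actual algorithm.

```python
import heapq
from typing import Callable


def route(
    graph: dict[str, list[str]],
    seeds: list[str],
    score: Callable[[str], float],
    budget: int,
) -> list[str]:
    """Best-first expansion from seed nodes that visits at most `budget` nodes.

    Only neighbors of visited nodes are scored, so total work is bounded by
    budget * max out-degree, independent of len(graph).
    """
    seen: set[str] = set()
    frontier: list[tuple[float, str]] = []
    for s in seeds:
        if s not in seen:
            seen.add(s)
            # heapq is a min-heap, so negate scores to pop the best node first
            heapq.heappush(frontier, (-score(s), s))
    visited: list[str] = []
    while frontier and len(visited) < budget:
        _, node = heapq.heappop(frontier)
        visited.append(node)
        for nxt in graph.get(node, []):
            if nxt not in seen:
                seen.add(nxt)
                heapq.heappush(frontier, (-score(nxt), nxt))
    return visited
```

Take a hypothetical graph where `root` links to `a`, `b`, and `c`, and `a` links to `a1`. With scores 0.9, 0.5, 0.1, and 0.8 respectively and a budget of 3, `route` visits `["root", "a", "a1"]`. It expands `a1` before `b` because 0.8 beats 0.5. With a fixed out-degree, routing through a 10-node chain or a 10,000-node chain touches the same number of nodes.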